-

Automatically backport commits using git

For those who just want the result please scroll to the bottom, otherwise you can read the story.

I started looking into how to backport commits since I know how easily git forward ports commits and figured there had to be a better way than the tricks and manual methods recommended in documentation and various results from Google. My original plan was to make a plugin that would automatically re-roll patches that need forward porting on drupal.org and, if possible, backport them as well. Over the course of an hour and a half I found a variety of snippets that got me part of the way there, developed a basic idea of how to accomplish backporting in git, and slowly refined it to built-in commands. Huge thanks to cbreak and FauxFaux in #git on freenode!

The basic idea I started from was to simply remove all the commits between the commit you want to patch on top of and the last commit in the tree. To test my approach I started by creating a simple situation representing 7.x -> 8.x using the following.

    $ git checkout -b test 8.x
    $ git mv core/CHANGELOG.txt core/CHANGELOG2.txt
    $ git commit -m "move"
    $ echo "hello world" > core/CHANGELOG2.txt
    $ git commit -am "edit"

I then proceeded to remove the second-to-last commit (“move”) to see if the last commit (“edit”) would be updated to edit core/CHANGELOG.txt instead of core/CHANGELOG2.txt.

    $ git rebase -i HEAD~3
    # commented out the "move" commit and saved
    $ git show

To my delight the “edit” commit was indeed updated.
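If you want to repeat the experiment without touching a real checkout, here is a minimal sketch that runs it in a throwaway repository. The temporary directory, the demo identity, and the file contents are assumptions made for illustration. Instead of commenting out the "move" line by hand, it sets GIT_SEQUENCE_EDITOR to a sed command that deletes that line from the todo list.

```shell
#!/bin/sh
# Sketch only: everything happens in a scratch repository.
set -e
export GIT_AUTHOR_NAME=demo GIT_AUTHOR_EMAIL=demo@example.com
export GIT_COMMITTER_NAME=demo GIT_COMMITTER_EMAIL=demo@example.com

repo=$(mktemp -d)
cd "$repo"
git init -q

# Starting point: a single file, standing in for the tip of 8.x.
mkdir core
echo "Drupal 8.0" > core/CHANGELOG.txt
git add core
git commit -qm "initial"

# The two commits from the test above.
git mv core/CHANGELOG.txt core/CHANGELOG2.txt
git commit -qm "move"
echo "hello world" > core/CHANGELOG2.txt
git commit -qam "edit"

# Delete the "move" line from the rebase todo list non-interactively.
GIT_SEQUENCE_EDITOR="sed -i.bak '/ move\$/d'" git rebase -q -i HEAD~2

# Which file does the surviving "edit" commit touch now?
edited_path=$(git diff-tree --no-commit-id --name-only -r HEAD)
echo "edit now touches: $edited_path"
```

This relies on git's rename detection, so the original and moved files need to be similar enough (here they are identical) for git to follow the rename.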

The next step was to figure out how to remove all the commits between the one to be backported and the point at which 7.x and 8.x “last overlap”, or the point at which 8.x was branched (those are not necessarily the same, and in Drupal’s case they are not). I first envisioned dumping the git rebase -i todo list to a file, editing it with a script to remove commits, and then rebasing. To that end it was suggested to change the GIT_EDITOR environment variable to a grep command and then use git rebase -i, which would work, but the better approach is to use --onto, which does exactly that.

    $ git rebase --onto=A B

In simplest terms: replay the commits after B on top of A, which removes all the commits after A up to and including B.
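To make that concrete, here is a small sketch on a linear history of five commits in a scratch repository. The commit names c1 to c5 and the file names are made up for illustration; A is c1 and B is c3, so c2 and c3 should disappear while c4 and c5 are replayed on top of c1.

```shell
#!/bin/sh
# Sketch only: a linear history c1..c5 in a scratch repository.
set -e
export GIT_AUTHOR_NAME=demo GIT_AUTHOR_EMAIL=demo@example.com
export GIT_COMMITTER_NAME=demo GIT_COMMITTER_EMAIL=demo@example.com

repo=$(mktemp -d)
cd "$repo"
git init -q

# Each commit adds its own file so that replaying never conflicts.
for n in 1 2 3 4 5; do
  echo "$n" > "file$n.txt"
  git add "file$n.txt"
  git commit -qm "c$n"
done

A=$(git rev-parse HEAD~4)   # c1: the new base
B=$(git rev-parse HEAD~2)   # c3: the last commit to drop

# Replay everything after B (c4, c5) on top of A.
git rebase -q --onto "$A" "$B"

remaining=$(git log --reverse --format=%s | paste -sd ' ' -)
echo "history is now: $remaining"
```

The `--onto "$A"` spelling with a space is the same option as `--onto=A` in the command above.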

Now I simply needed a way to find the best point to rebase onto. There are several snippets on Stack Exchange and elsewhere that find the “oldest ancestor” which would work, but a) are not optimal, and b) require long snippets that are not easy to understand. When I dug around in #git I was pointed to git merge-base, which finds the best point for such an operation.
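As a quick illustration of what git merge-base reports, here is a minimal sketch in a scratch repository. The branch names mirror this post; the files and commit messages are assumptions for the demo. Both branches get a commit of their own after the fork, and merge-base should still report the fork commit.

```shell
#!/bin/sh
# Sketch only: two branches that diverge from one shared commit.
set -e
export GIT_AUTHOR_NAME=demo GIT_AUTHOR_EMAIL=demo@example.com
export GIT_COMMITTER_NAME=demo GIT_COMMITTER_EMAIL=demo@example.com

repo=$(mktemp -d)
cd "$repo"
git init -q
git symbolic-ref HEAD refs/heads/7.x   # name the initial branch 7.x

echo "shared" > shared.txt
git add shared.txt
git commit -qm "shared history"
fork=$(git rev-parse HEAD)
git branch 8.x                         # 8.x forks here

echo "seven" > seven.txt
git add seven.txt
git commit -qm "7.x only"

git checkout -q 8.x
echo "eight" > eight.txt
git add eight.txt
git commit -qm "8.x only"

base=$(git merge-base 7.x 8.x)
echo "merge-base: $(git log -1 --format=%s "$base")"
```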

    $ git merge-base 7.x 8.x

This finds the last common commit between the 7.x and 8.x branches. Using this we get the following.

    $ git rebase --onto=`git merge-base 7.x 8.x` HEAD~1

This backports the latest commit onto the merge-base for 7.x and 8.x. Finally, we just need to forward port the patch to the end of the 7.x branch, which is easy.

    $ git rebase 7.x

Result

So putting it all together we get the following powerful one-liner that will backport the last commit in the 8.x branch to the 7.x branch.

    $ git rebase --onto=`git merge-base 7.x 8.x` HEAD~1 && git rebase 7.x

If you want to backport something other than the last commit, simply check out a branch with that commit as the HEAD.

    $ git checkout -b backport-branch commit-to-backport

This can also be extended to backport a patch posted on drupal.org by downloading and committing the patch first.

    $ # your favorite download method (wget, curl, etc.)
    $ git apply some.patch
    $ git commit -am "patch to backport"
    $ git rebase --onto=`git merge-base 7.x 8.x` HEAD~1 && git rebase 7.x
    $ git show > some.patch

You can of course use a fancier git format-patch and whatnot, but this gets the point across. Enjoy!
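For anyone who wants to try the whole thing end to end, here is a sketch in a scratch repository. The helper name `backport_head`, the demo identity, and the file contents are all made up for illustration; the two rebase commands inside the helper are the one-liner from this post. It works on a separate `backport` branch because rebasing rewrites whichever branch is checked out, and it finishes with git format-patch as one possible way to export the result.

```shell
#!/bin/sh
# Sketch only: model 7.x and 8.x in a scratch repository and backport
# the last 8.x commit.
set -e
export GIT_AUTHOR_NAME=demo GIT_AUTHOR_EMAIL=demo@example.com
export GIT_COMMITTER_NAME=demo GIT_COMMITTER_EMAIL=demo@example.com

# backport_head OLD NEW: hypothetical wrapper around the one-liner.
# Rebases the current branch, so run it on a throwaway branch.
backport_head() {
  git rebase -q --onto "$(git merge-base "$1" "$2")" HEAD~1 &&
    git rebase -q "$1"
}

repo=$(mktemp -d)
cd "$repo"
git init -q
git symbolic-ref HEAD refs/heads/7.x

mkdir core
echo "Drupal 7.0" > core/CHANGELOG.txt
git add core
git commit -qm "shared history"
git branch 8.x

# 7.x moves on after the fork.
echo "7.x fix" > README.txt
git add README.txt
git commit -qm "7.x-only change"

# 8.x renames the file, then edits it.
git checkout -q 8.x
git mv core/CHANGELOG.txt core/CHANGELOG2.txt
git commit -qm "move"
echo "hello world" > core/CHANGELOG2.txt
git commit -qam "edit"

# Backport the "edit" commit on a separate branch.
git checkout -q -b backport 8.x
backport_head 7.x 8.x

backported_path=$(git diff-tree --no-commit-id --name-only -r HEAD)
backport_parent=$(git rev-parse HEAD~1)
git format-patch -1 --stdout > "$repo/backport.patch"
echo "backported commit touches: $backported_path"
```

After the helper runs, the `backport` branch should be the tip of 7.x plus one commit that edits the file under its 7.x name.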

-

Code coverage reports

Today, I made public a whole bunch of commits I made while improving the Code coverage project for use with ReviewDriven. You may remember the screenshot I included in Part 2: Breathing new life into the testbot which is where this is coming from. Thanks to the improvements, the module now actually works and is much more efficient, polished, and accurate. With that in mind I am proud to announce the first stable release of the project targeted at Drupal 7.14.

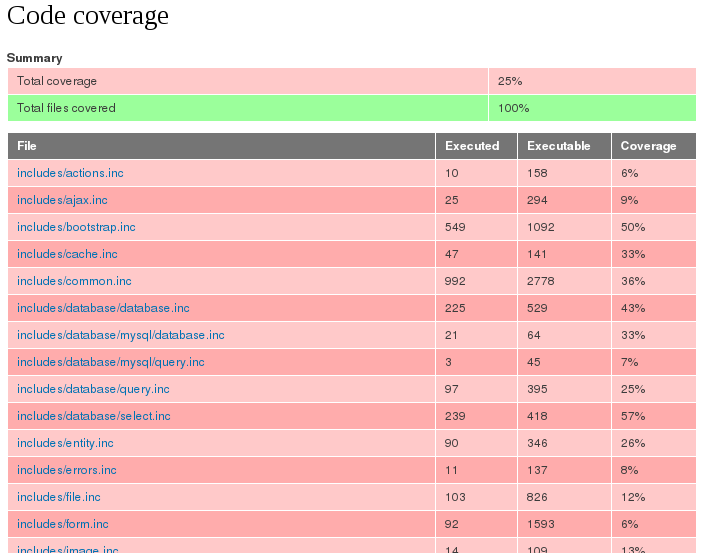

Using the module you can get coverage reports for individual page executions or any combination of tests. Code coverage reports can be extremely useful in determining areas of code that are not tested at all or provide a snapshot of the code involved in generating a page.

Pages

Code coverage can be recorded by adding

    ?code_coverage=true

to the end of a page URL. After the page has completed execution, a link will be placed at the bottom of the HTML, which will display outside the page style.

The link will open the coverage report generated for that page request. The report will include all the files that were loaded during the execution of the page.
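For scripting this, here is a small sketch of a helper that appends the parameter to a URL. The function name `with_coverage` and the example.com URLs are made up for illustration. Joining with `&` when the URL already has a query string (as with non-clean URLs) is my assumption about how the parameter should be added in that case, and URL fragments are not handled.

```shell
#!/bin/sh
# with_coverage URL: hypothetical helper that appends code_coverage=true,
# using "&" if the URL already has a query string and "?" otherwise.
with_coverage() {
  case $1 in
    *\?*) printf '%s&code_coverage=true\n' "$1" ;;
    *)    printf '%s?code_coverage=true\n' "$1" ;;
  esac
}

with_coverage "http://example.com/node/1"
with_coverage "http://example.com/?q=node/1"
```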

Tests

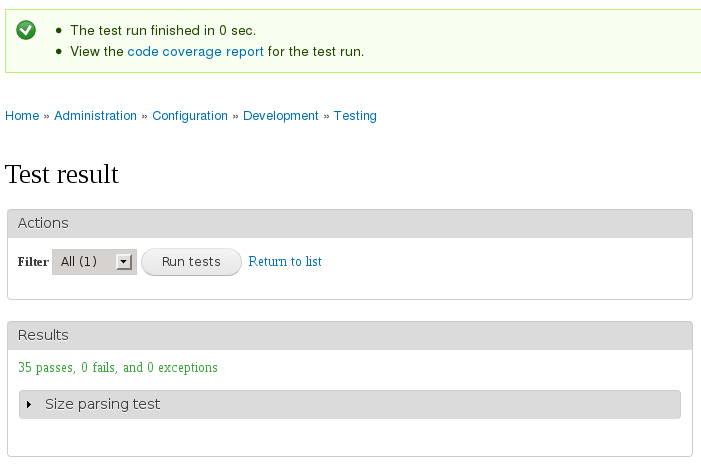

Similarly, a link is provided for the code coverage recorded during a test run.

Reports

The report includes two parts: 1) the summary or index, and 2) the line-by-line coverage information. The links described above point to the summary of the coverage information.

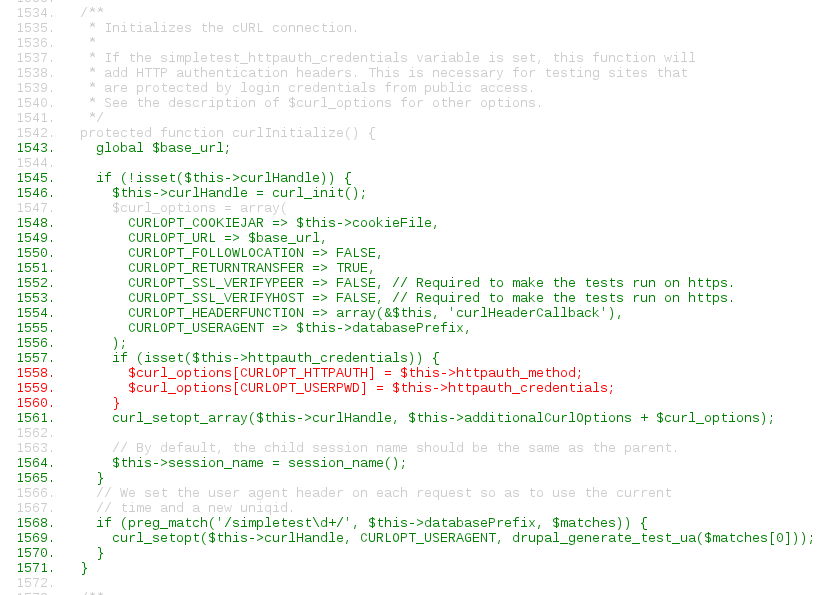

The links in the summary point to the line-by-line coverage information overlaid on the corresponding code. The colors indicate the following.

- green = executed

- red = not executed

- gray = ignored (or non-executable)

Filters

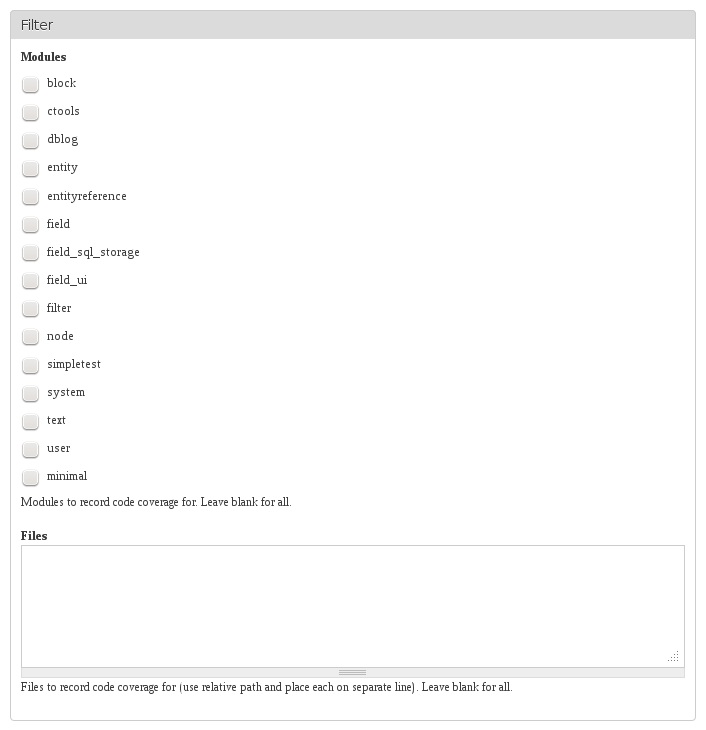

The coverage scope may be filtered to focus on improving coverage for a particular module/file/directory. Reducing the scope will also improve the coverage recording performance, which may be useful when dealing with large tests.

Future

I have already integrated the Code coverage project with Conduit (the open source ReviewDriven platform) which will be replacing the current system running qa.drupal.org. The plan is to get the new platform up and running in parallel with the current system at which time regular coverage runs against core (and contrib projects) can be made publicly available.

-

Part 3: Testing battle plan

I have been meaning to make this post for quite some time. I have posted pieces before and had many discussions, but I have yet to write it out formally. Moshe’s recent post about Upal motivated me to finally write the post.

Background

The SimpleTest code for mimicking browser behavior, specifically the form handling, has required a fair amount of upkeep, improvement, and generally wasted resources that could be better applied elsewhere. I attempted to clean things up with the external browser component, but that effort ended up dying. Over time I became more and more convinced that we should revisit the basis for our testing system and try to rebuild things on an existing framework.

You may be wondering why we ever decided to create our own framework in the first place, which is indeed a good question. Back before we had a testing framework in core the concern with adding the SimpleTest.org library to core was its size. At the time SimpleTest was not ready to depend on PHP 5 and specifically SimpleXML. It soon became clear that we could develop our own internal browser using a combination of PHP 5 tools that ended up being MUCH smaller than SimpleTest.org’s implementation. This process was led by the poor assumption that we should commit the testing framework to Drupal core. In hindsight that was probably not the best idea.

Rather than commit a third-party library to core, it should simply be included using a build script (like many other projects), and Drupal has a system tailored to do exactly that: Drush make. We could even use this approach for jQuery instead of committing the entire library into the core repository. Drupal.org already supports invoking Drush make scripts during release building so the general public wouldn’t even notice the difference.

In addition to the size problem is the issue of bandwidth, which I and many others have discussed many times before. Keeping the Drupal testing integration (with testing library) in contrib would allow it to be maintained and more easily developed while removing an unnecessary burden from Drupal core.

Combination of tools

It is great to see Moshe’s post and plan for building atop PHPUnit. Using PHPUnit definitely seems like a great start as it provides integration with many other tools, a familiar API, and using drush removes the “two Drupal sites” problem, but PHPUnit doesn’t replace the browser component nor provide a JavaScript testing platform. At this point it seems prudent to build the functional tests atop Selenium, which allows the tests to be written in PHP while alleviating the need for the custom browser component and allowing for JavaScript testing.

I was part of the initial effort to get QUnit into core along with cwgordon7. We had a working version integrated with the test runner, but things were derailed for various reasons which has since spawned the QUnit project on Drupal.org.

Yeah, ideally we use PHPUnit and QUnit for PHP/JS unit testing, respectively, and Selenium for functional testing. Those are each the best tools for the job.

Selenium has also received some love in the form of a SimpleTest integration project on Drupal.org. We have the makings of a great testing setup, but we need to put the pieces together.

Battle plan

Moving forward it seems prudent to continue maintaining each of the pieces in their respective locations and outside of core.

- Selenium project needs to refactor to build on Upal

- Rebuild DrupalWebTestCase as a compatibility layer on top of Selenium

- Integrate QUnit project with Upal in a similar fashion to Selenium

- Provide PHPUnit test output parser for testbot

- Provide a drush make script for testing in core or in a central hub repository in contrib

- Inventory and refactor Drupal 8 tests to use new system while removing duplication and waste

Let’s make this happen!

-

Part 2: Breathing new life into the testbot

The purpose of this post is to describe the solution that, after careful consideration, seems best suited to alleviating the situation described in the previous post. Other solutions may exist that we have not considered and that will effectively solve the problem. We are open to discussing alternatives and welcome constructive comments on our proposal. At the same time, we discourage negative comments that do not offer a positive alternative. As it is clear the current situation needs improvement, simply dismissing our proposal without offering a better alternative is not useful.

Proposal

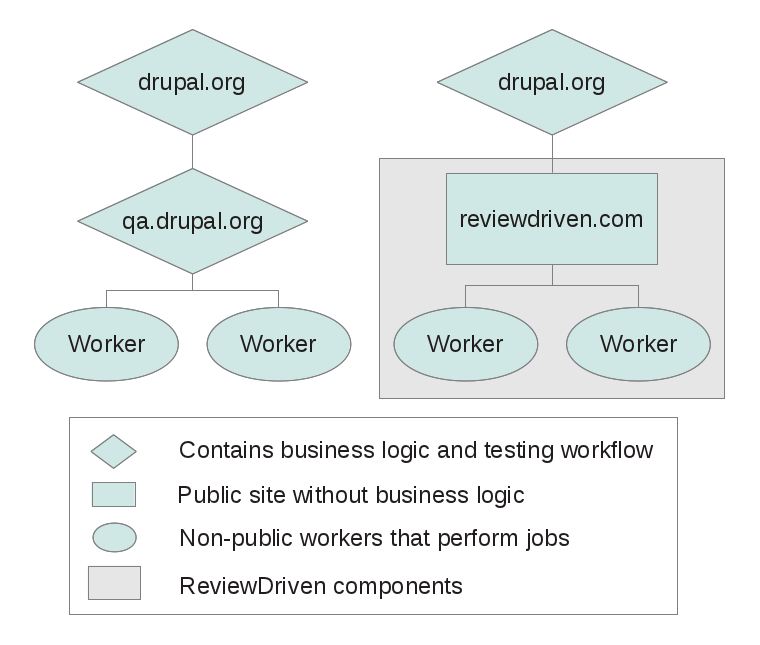

ReviewDriven (RD) is a distributed quality assurance platform built to provide a simple yet powerful interface that makes it easy to apply the best practices of continuous integration, test driven development, and automated quality reviews to your development life cycle. The ReviewDriven stack provides a completely rebuilt system designed to take advantage of Drupal 7 and contributed modules that will allow Drupal.org, Drupal development shops, and site owners to take advantage of automated quality assurance. In Drupal terms, RD is the next generation of the testbot (qa.drupal.org).

We would like to see DO and other interested parties take advantage of automated QA tools. Towards that end, we propose that DO engage RD to assume the role of the testbot and provide those same services to the Drupal community.

Advantages

One of the limitations of the current system and one of the primary concerns we addressed with RD is the lack of control over the testing workflow. For example, the current workflow settings apply globally instead of on a more granular basis. In contrast, the RD platform will allow Drupal.org full control to define the workflow and settings to be used with each review. The integration between the testbot (RD) and Drupal.org will continue to be maintained as an open source module which will allow anyone to contribute ideas and changes to the QA workflow on Drupal.org. Since the ReviewDriven stack provides a versioned API, the Drupal.org integration may be maintained and updated independent of RD and on its own schedule. This approach leaves all the control and flexibility in the hands of the Drupal community and shifts the burden of the testbot to RD.

Other challenges faced by the current system were also taken into consideration when building ReviewDriven. The ReviewDriven stack is extremely flexible which in itself solves a number of the current issues and opens up a variety of new options. This opens up the possibility of reviews for things like Coder and code coverage.

The RD stack as a whole is much more maintainable since it is built on as many contributed modules as possible. This keeps the actual codebase much smaller than the current system (PIFR). Depending on contributed modules has led us to suggest and contribute a number of improvements to those modules, and to create other contributed modules. This also implies the system is easily maintainable by the Drupal community in the event we open source the code (since the majority of the code is already maintained by others). The RD architecture is analogous to any other Drupal site (sponsored by a good citizen) in that we are maintaining the code specific to our site while contributing back to the community modifications to existing modules and new features designed as generic modules.

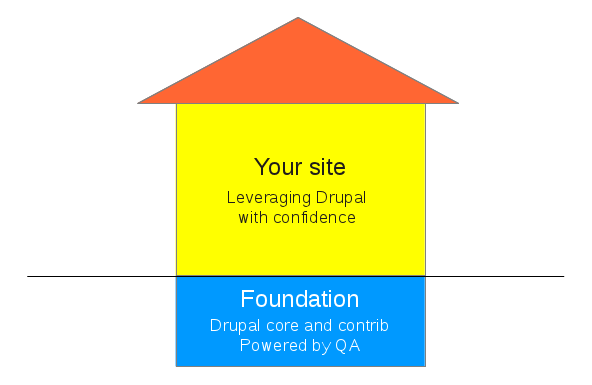

Putting QA in context

Our proposal hinges on the fact that the testbot (and most of Drupal.org) provides a direct benefit to everyone but, just like roads and other infrastructure, the cost needs to be shared. Core and contributed modules provide a direct benefit in an indirect way by reducing the amount of and time spent writing custom code, ensuring the base system works as intended, and allowing you to leverage with confidence that code base while building your site. Core and contributed modules represent the sure foundation upon which you build your house.

There has been plenty of discussion about the important role the testing infrastructure and testing as a whole played in Drupal 7 development. The benefit of QA is also evident by the fact that a number of very large Drupal sites launched on Drupal 7 before it was officially released. The stability and dependability offered by quality assurance testing is something everyone wants.

Drupal.org is one of very few open source projects, much less projects in general, to adopt quality assurance and testing. The Drupal core development process requires new features to have tests and bug fixes to include tests. This workflow is encouraged for contributed projects and has been adopted by many of the more used projects among others. Not only does this help ensure the stability and quality of Drupal and its contributed projects, but in turn serves as a selling point and differentiator for Drupal adoption. Given that QA is both an adoption point and a vital tool for improving Drupal, does it not follow that it makes sense to provide funding for a full-time effort towards its improvement?

Just as many Linux developers are full-time employees paid to work on improving Linux, we seek to work full-time on improving the Drupal ecosystem through quality assurance. We are not the first to be funded full-time to work on Drupal or to be paid to improve Drupal.org. A perfect example is Angie Byron (webchick) who was hired by Acquia to work full time on Drupal. Just as Linux was started by hobbyists and grew into a profession, so too Drupal appears to have outgrown the ability to be maintained entirely by volunteers.

Funding

We see two separate areas that need funding. The first focuses on taking advantage of the ReviewDriven platform by updating the Drupal.org integration with the new testbot (RD). The second area is the ongoing fee for use of the platform (which includes infrastructure costs). RD will use the ongoing fees to improve and maintain the platform (like any other business).

Harnessing the flexibility provided by ReviewDriven will require a large overhaul of the current Drupal.org QA integration module (PIFT). We envision Drupal taking advantage of the granular settings supported by RD to provide per project, per release, per issue, and per patch settings to control the reviews made. Granular settings will ensure that the various workflows, coding standards, and environments that exist in “contrib” can be handled properly. Many projects have different requirements or adhere to different standards between their various releases. The integration with the new testbot would remain open source as Drupal.org integration and can be funded just like any other Drupal.org project.

We would also like to see a QA status advertised on each project page, possibly even some sort of ranking based on a number of quality assurance metrics. These metrics would help people select between similarly featured modules, advertise that we do QA, and help motivate developers to adopt QA. We have many other ideas for improvements and anticipate suggestions from the community.

The ongoing service also requires ongoing funding to handle infrastructure costs, feature improvements, updates for Drupal core changes, and requests from the Drupal community. This funding would ensure that things do not stagnate. We have a vision for new features that would significantly improve the Drupal ecosystem, some of which we have discussed with a few community members.

We envision either the Drupal Association or a group of businesses and other organizations with an interest in Drupal hiring RD as the logical successor to the current testbot. Our business will be to develop and maintain the testbot for use by Drupal.org and other organizations. The same approach can then be applied to other critical pieces of infrastructure such as the improvement of Drupal.org and its maintenance. We would like to pioneer this effort for Drupal to further enhance the process and tools available to Drupal contributors and the community.

Further details about the specifics of the arrangement, details of the improvements, and plans for the future can be found in our formal proposal and addendum.

What to expect during the transition

With both the short-term upgrades and improvements to the Drupal.org integration and the ongoing RD services funded, we see the transition to RD taking shape as follows.

The first stage of the transition will require the update of Drupal.org’s integration with the testbot to provide basic connectivity with ReviewDriven. Supporting RD will require a number of changes, both user-facing and behind the scenes. In addition, just using the ReviewDriven platform will enable a number of features and workflows.

Once the initial integration is ready to be tested in production, we suggest both ReviewDriven and the predecessor be run in parallel. Running both systems in parallel will provide the community with a preview of what is to come and an opportunity for feedback. Results from the two systems can be compared to provide a final round of human checks and give people time to adjust to the new system. After the completion of the parallel phase the old testbot will be deactivated and the new system will be given priority.

The second phase involves the larger changes necessary to take advantage of ReviewDriven’s features and flexibility. We will start discussions and work on this phase as the initial integration stabilizes.

Some of the exciting features we will expose to DO in one or both of the stages include:

- Much improved turnaround time with the ability to scale as the test suite grows

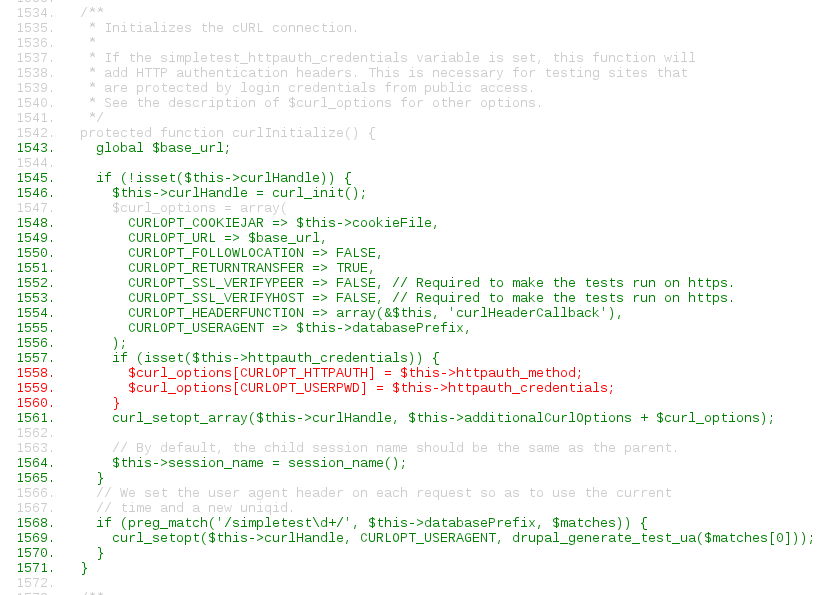

- Code coverage reports from test runs [picture]

- Testing of sandboxes (both core forks and modules)

- Support for the developer application process

- Drush make scripts for retrieving third party libraries.

- Drush make files in lieu of parsing the project dependencies

- Execution of arbitrary commands during various stages of the worker processing

- Automatic enabling of issue retesting

- Completely automated site reviews (reviews run against a configured site)

- Reroll of a patch (using git rebase) on one issue after a commit on another issue (for example, this large core change)

- Display of quality metrics

- Visible branch test results

- Forcing a patch to run when a branch is broken (in order to fix the branch)

- Determine the disruption to other patches that would be caused by another patch (e.g. the patch to move all core files)

- Run Selenium tests

- Provide separation between the testbot and the Drupal installer by writing a special script maintained by the community

Example of code coverage during a test run: green = executed, red = not executed, gray = ignored (or non-executable).

Conclusion

We look forward to feedback about our proposal and encourage you to voice your opinion. Please be sure to be constructive. In case it’s not obvious, we are extremely passionate about doing this. So let’s make this happen.

-

Part 1: The woes of the testbot

The intent of this series of posts is not to blame people, but rather to point out the testbot needs full-time attention. Integral to this story are the decisions and circumstances that led me to stop working on SimpleTest in core and the “testbot” which runs on qa.drupal.org. I intend to follow-up this post with others dealing with rejuvenation of the testbot and improvements to SimpleTest. I understand some will not agree with my position, but I would like everyone to understand my reasons and intentions, and how we find ourselves in the current state of affairs. After everything is out in the open, my hope is that a useful discussion will ensue and meaningful progress will result.

Factors

Four factors led me to stop working on SimpleTest in core and the testbot:

- I no longer had gratuitous amounts of free time.

- I now had a need to make a living (and working on the testbot does not generate any income).

- The core development process being what it is led to burnout and lack of desire.

- The request to stop working on the testbot in conjunction with the Drupal 7 code freeze.

My absence magnified the fact that no one else worked on the testbot and, going forward, no one stepped up to take my place.

Background

Let’s start off with some background about my involvement with the Drupal testing story.

SimpleTest's journey to core

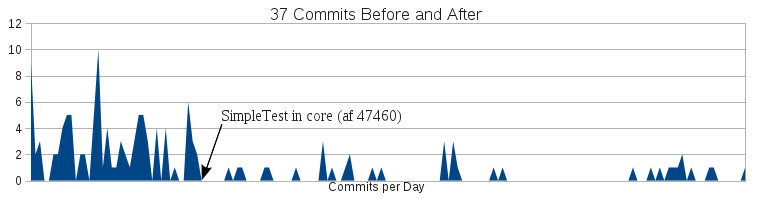

Rewind the clock back to early 2008. I had gotten involved in Drupal through GHOP and became maintainer of SimpleTest. I proceeded to perform a large-scale refactoring and cleaning up of SimpleTest. This, combined with other community efforts, resulted in SimpleTest being added to Drupal 7 core during the Paris Coding Sprint. The rapid pace at which I was able to develop SimpleTest quickly slowed as I no longer had the ability to commit changes nor make design decisions. Instead, even the most trivial changes took days or weeks to get committed. In spite of these additional challenges, I continued to diligently work on SimpleTest in core. To my dismay I discovered on multiple occasions that large changes were virtually impossible to push through the core queue, and I spent countless hours rerolling patches and refactoring code at various developers’ whims. In the end, the patches simply died, but not for lack of quality or merit.

The chart shows 37 commits to the SimpleTest project before and after it was added to Drupal core. It is clear the pace of development slowed immediately and lessened further with time.

Changing course, I focused on small changes to SimpleTest in core, but ran into similar throughput issues. For all intents and purposes, my ability to make contributions to SimpleTest had ground to a halt. This led me to write a blog post detailing the problem and possible solutions. I was not alone in my conclusions and many would still like to see the problem resolved. I continued to contribute to core now and then, but I was completely burned out. I even took month-long breaks from Drupal as it literally burned me out to try to make any contribution to core. My burnout was not caused by overwork but was due to frustration with the exaggerated length of time to accomplish a minor commit.

Following up SimpleTest with the testbot

On a parallel track, getting SimpleTest into core turned out to be only half of the battle. Actually seeing the tests adopted and maintained remained a challenge. I led the charge to keep the tests in sync (initially doing it almost alone). The effort to create an automated system for running the tests had been underway for quite some time, but lacked the necessary volunteers and commitment to really get it off the ground. I was then asked to take over the project at which point I evaluated its status and decided to start over. I created PIFR, a plan for realizing the goal, and proceeded to rapidly make progress. Testing.drupal.org launched shortly afterward and testing became an integral part of the Drupal core workflow.

With a working system I then laid plans for a second iteration of the testbot with a number of improvements. After heavy development the second generation of the testing system was launched with a massively improved feature set.

Seeking sponsors

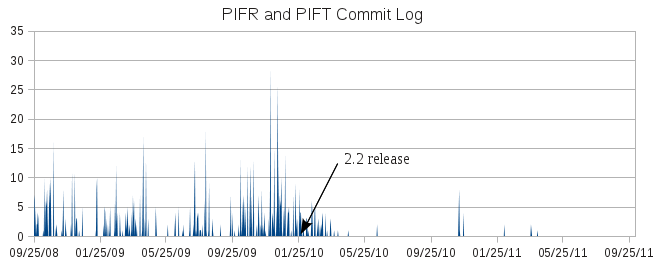

After graduation from high school I was no longer financially able to devote large portions of my time to the testing system or core development so I sought sponsors to enable me to continue my work. Acquia provided an internship that allowed me to focus on testing again. After successfully completing the internship I found a job with Examiner.com that allowed me to spend a portion of my time improving and maintaining the automated testing system and roll out the initial work for contributed project testing and a number of other improvements in ATS (PIFR and PIFT) 2.2. The contributed project testing with dependencies was labeled beta because it did not support specific versions and had known issues. The plan was to make a followup release to solve the issues.

Code freeze and the request to stop

After deploying PIFR 2.2, I was asked to stop making changes to the testbot to ensure stability of the testing system during the final stages of Drupal 7 development. I continued to make improvements that I planned to deploy once the freeze was lifted, but the short freeze turned into months and more months. This delay ultimately forced me to stop development before the codebase diverged too much from the active testbot.

The chart shows my combined commit activity for PIFR and PIFT and indicates the dramatic slowdown that occurred as a result of the freeze placed on the testbot.

During this time I was the only person who worked on the testbot in any significant capacity (or virtually at all). My availability for working on testing dwindled when my time with Examiner ended. This, combined with the stagnation forced upon the testbot, meant things simply ceased moving forward. The complete stagnation is seen in the long period of time between the 2.2 release and the 2.3 release of PIFR on January 28, 2010 and March 28, 2011, respectively. During that entire period of more than a year no changes were made to the testbot. When changes were finally made, they were done merely out of necessity to accommodate the git migration.

Post-freeze undeployed features

Shortly after the 2.2 release I completed a number of improvements before things came to a stand-still. Some of the recent deployments have included functionality that I had completed, most notably:

- Version control system abstraction and plugins for bazaar, cvs, git, and svn

- Coder reviews in addition to testing

- Beta support for contributed project testing with dependencies

Recent changes

As mentioned above, I had already abstracted the version control handling in the testbot and had four plugins (bazaar, cvs, git, and svn). Unfortunately, there were a number of assumptions that had to be made due to limitations with the project module’s VCS integration. These assumptions had to be updated for the shiny new version control API. The changes required were very minor and did not represent any feature improvements, but were simply part of the changes necessary to complete the git migration. Randy Fay made the necessary changes and the testbot saw its first update in a very long time. A few small followups were released as part of the planned phasing out of the old patch format and such. It is interesting to note the other major components of the Drupal.org migration were contracted by the Drupal Association except the automated testing system.

Jeremy Thorson has recently been working on using the testbot’s ability to perform coder reviews to help solve the woefully broken project application process which he describes in several blog posts. Again we see change coming to the testbot out of necessity rather than a focused plan for improvement. For those not aware of it, the project application queue has several hundred applications and it takes months to even receive a review. Jeremy has worked hard on improving the application process, at the heart of which is the ability to perform automated coder reviews. Providing automated reviews has been held back on multiple fronts, not the least of which is finding people to get things done. This is a definite hurdle considering that only three people have ever worked on the testbot code itself, not to mention there is an average of less than one active maintainer at any given time.

As mentioned above, I had deployed the first stage of contributed project testing over a year ago, but was forced to shelve the follow-up deployments. The code to properly handle module dependencies fell into disarray with the git migration and required refactoring to work with the version control API. Derek Wright and I spent a lot of time hashing out the details to ensure things were properly abstracted for the project module. I completed the code, but it was never committed and thus was not maintained through the migration. Randy took it upon himself to update the code, but deviated from the agreed upon design. This choice meant the code would not be included in the project module and has a number of other ramifications. The feature was rebuilt in a drupal.org specific manner that precludes others from taking advantage of the code and eliminates the possibility of exposing the data through the update XML information. Exposing the data in that fashion would mean projects like drush, drush make, Aegir and others could discard code written to recreate this data or would now be able to support proper dependency handling. In addition, the recent deployment of dependency handling has led to large delays and instability in the testbot.

Conclusion

The decision to freeze the testbot in conjunction with the Drupal 7 code freeze made sense at the time. However, the extended freeze of the testbot (due to the extended Drupal 7 code freeze) along with moving SimpleTest into core had the unintended and disappointing side effect of causing the effective stagnation of the testing system. The only changes to the testbot in the past 20 months have been made out of necessity and annoyance (the git migration and the unfinished testbot integration with the project application process for new developers). During my tenure with Examiner.com, a fair number of changes were made to the testing system but not deployed on drupal.org. The module dependency code had been written over a year ago and finalized shortly thereafter but languished and was never deployed. Recently, some of these changes were finally deployed along with the git migration. All the while, I had set forth a detailed roadmap for the testing system.

The testing system had been stable and running for 3 years. Recent changes (implemented by others) have resulted in the ups and downs of the testing system. The importance of testing to Drupal development coupled with the recent instability strongly suggests the testing system requires full-time attention. The lack of feature changes since the 2.2 release of PIFR in January, 2010 is a direct result of a lack of financial testing resources, the lock-down of the testing system components, the burnout caused by extreme difficulty to make changes, and the extended freeze placed on the testbot.

Various solutions were tried to enable the continuation of work on the testbot. None represented a viable long-term solution. In the end, my father and I decided the solution was to establish a business to advance testing for the Drupal community and to create an environment where we no longer have our hands tied behind our back. In the next post, I will share the vision and passion we have for testing along with several features that could be made available to the community immediately.