-

Firefox userContent.css for drupal.org issue queue

After seeing a reference to http://userstyles.org/styles/11133 by tha_sun in IRC, I went ahead and played with it a bit. I ended up with a very simple version that just removes the blocks and makes the issue table full width. While I was at it, I customized Google.

If you do not know where to put this, check out per-site custom CSS in Firefox.

    @namespace url('http://www.w3.org/1999/xhtml');

    @-moz-document url-prefix('http://drupal.org/project/issues/') {
      #spacer {}
      #contentwrapper { background: none !important; }
      #main { margin-left: 5px; margin-right: 5px; }
      #threecol, #content, #squeeze {
        width: 100% !important;
        margin: 0 !important;
        padding: 0 !important;
      }
      .sidebar .block { display: none !important; }
    }

    @-moz-document url('http://www.google.com/') {
      #spacer {}
      #ghead, td[align='left'], td[width='25%'], #body > center > font,
      input[name='btnG'], input[name='btnI'], #footer {
        display: none;
      }
      #main > center { margin-top: 250px; }
    }

-

Acquia internship

This summer I will be working part-time as an intern for Acquia. I am very excited to be working with Acquia and to have the chance to spend more time improving things that interest me. To clarify, I will be working on projects that benefit the entire Drupal community. The items I will be working on are improvements to projects I have either started or am heavily involved with.

During the discussion of the internship I came up with the following goals that were then prioritized by Dries.

Primary goals

- Finalize testing of contributed modules and Drupal 6.x projects/core.

- Add executive summary of test results on project page.

- Extend the SimpleTest framework so we can test the installer and update/upgrade system.

- Improve and organize SimpleTest documentation.

- Work on general enhancement of Drupal 7 SimpleTest.

Secondary goals

- Provide on-demand patch testing environment for human review of patches.

- Finish refactoring of SimpleTest to allow for a clean implementation of "configuration" testing.

- Analyze current test quality and code coverage, and foster work in areas requiring attention.

I will post updates on some of the more interesting items as they are accomplished. Additionally, I would like to give a special thanks to Kieran Lal for his mentoring and help in finding me sponsorship.

FOLLOW UP: To clarify, I will still be participating in Google Summer of Code 2009, which was explicit in my agreement with Acquia. Follow up post by Dries.

-

Vacation Summer '09

I will be on vacation starting tomorrow morning (Sunday - 5/9/09) for about a week. See everyone on the other side.

-

Automated Testing System - Statistics

I decided to gather some statistics about the automated testing system. These statistics were collected on Wednesday, May 6, 2009 at 4:00 AM GMT. Automatic generation of these statistics, along with analysis, is a feature I have in mind for ATS 2.0. I appreciate donations to the chipin (right), as this project requires a lot of development time.

From the data you can see that the test slaves have been running tests for the equivalent of 200 days. The system has been running for 192 days, and not all the data was included since some of it is inaccurate. That means the system has saved 200 days of developer’s time! It is clear that the ATS is a vital part of test-driven development. Additionally, the time that would have been spent fixing regressions and new bugs has been drastically lowered.
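As a quick check of the arithmetic, the total testing time reported in these statistics (17,310,047 seconds) can be converted to minutes and days. This is only a minimal sketch; the function name `summarize_duration` is made up for illustration and is not part of the ATS.

```python
SECONDS_PER_MINUTE = 60
SECONDS_PER_DAY = 24 * 60 * 60


def summarize_duration(total_seconds):
    """Return the duration expressed in minutes and in days."""
    minutes = total_seconds / SECONDS_PER_MINUTE
    days = total_seconds / SECONDS_PER_DAY
    return minutes, days


# Total "time testing" value taken from the statistics in this post.
minutes, days = summarize_duration(17_310_047)
print(f"{minutes:,.0f} minutes, {days:.1f} days")
```

The result works out to about 288,500 minutes, or roughly 200 days.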

| Item | Function | Value |
| --- | --- | --- |
| Time testing | SUM | 17,310,047 seconds (~288,500 minutes, ~200 days) |
| Test run (test suite) | COUNT | 42,351 |
| | MAX | 3,620 seconds (~60 minutes) |
| | MIN | 17 seconds |
| | AVG | 804 seconds (~13 minutes) |
| | STDDEV_POP | 783 seconds (~13 minutes) |
| Test (patch, times tested) | COUNT | 6,953 |
| | MAX | 86 |
| | MIN | 1 |
| | AVG | 10 |
| | STDDEV_POP | 15 |
| Test pass count | MAX | 11,453 |
| | MIN | 0 |
| | AVG | 4,265 |
| | STDDEV_POP | 4,910 |
| Test fail count | MAX | 6,989 |
| | MIN | 0 |
| | AVG | 9 |
| | STDDEV_POP | 155 |
| Test exception count | MAX | 813,795 |
| | MIN | 0 |
| | AVG | 160 |
| | STDDEV_POP | 9,893 |

One item you may notice is the maximum test exception count of 813,795. The patch that caused that many exceptions proved that our system is scalable! The patch is much appreciated. :)

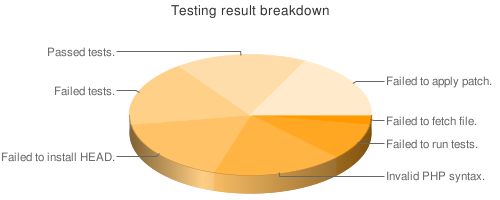

A snapshot of the current test result breakdown.

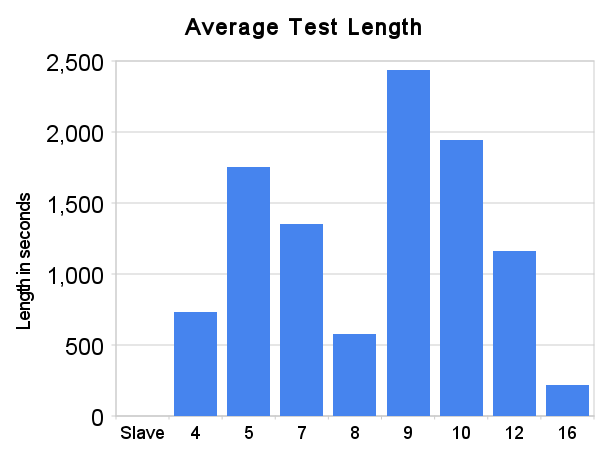

The average test run length for all the active test slaves can be seen below. This data is only looking at the latest test run for each patch in the system.

| Test slave | Average test length* |
| --- | --- |
| 4 | 730 seconds |
| 5 | 1,753 seconds |
| 7 | 1,352 seconds |
| 8 | 576 seconds |
| 9 | 2,438 seconds |
| 10 | 1,942 seconds |
| 12 | 1,161 seconds |
| 16 | 217 seconds |

\* Excludes test runs that do not pass initial checks and fail before running the test suite.

-

Automated Testing System 2.0 - New Features - Part 1

Over the next few weeks I plan to make a number of posts about the new features provided by ATS 2.0 and the benefits to the community. Currently, the system is in the final stages of deployment, but is not yet active. Please be aware that these features will be available once ATS 2.0 has been deployed. I appreciate donations to the chipin (right), as this project requires a lot of development time.

Server management

One of the major constraints holding back the expansion of the system has been the need to manually oversee the array of testing servers. The new system contains a number of enhancements that not only make it easier to manage the network, but also automate the task of adding new clients.

The most important addition that makes all this possible is automatic client testing. Clients are automatically tested to ensure they are functioning properly. This is done through a set of tests, each with an expected result, that are sent to each test client. The results the client sends back are compared with the expected results, and that information is used to determine whether the client is functioning properly. Clients are tested on a regular basis to ensure that they continue functioning as expected.
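The check described above can be sketched roughly as follows. This is an illustrative sketch only, not the actual ATS code: the names `ProbeTest`, `client_is_healthy`, and `run_on_client`, and the shape of the result dictionaries, are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProbeTest:
    """A known test sent to a client, plus the result it should produce."""
    name: str
    expected: dict  # hypothetical shape, e.g. {"pass": 3, "fail": 1, "exception": 0}


def client_is_healthy(probes, run_on_client):
    """Return True only if every probe comes back with its expected result.

    `run_on_client` stands in for whatever transport actually sends a probe
    to the client and returns the result the client reports.
    """
    for probe in probes:
        actual = run_on_client(probe.name)
        if actual != probe.expected:
            return False
    return True


# Usage with two fake clients (hypothetical data).
probes = [
    ProbeTest("known_pass.patch", {"pass": 3, "fail": 0, "exception": 0}),
    ProbeTest("known_fail.patch", {"pass": 2, "fail": 1, "exception": 0}),
]
good_results = {p.name: p.expected for p in probes}
bad_results = dict(good_results, **{"known_fail.patch": {"pass": 3, "fail": 0, "exception": 0}})

print(client_is_healthy(probes, good_results.get))
print(client_is_healthy(probes, bad_results.get))
```

Including a probe that is expected to fail matters here: a broken client that reports everything as passing would otherwise go unnoticed.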

Another helpful change has been reworking the underlying architecture to use a pull-based protocol instead of a push-based protocol. This alleviates the issues caused when a client is unreachable for a period of time, or is removed without notice.
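To illustrate why pulling helps, here is a minimal in-memory sketch of the idea. It is not the ATS protocol itself; `Master`, `request_job`, `report`, and `client_poll_once` are hypothetical names, and a real system would talk over the network rather than call methods directly.

```python
from collections import deque


class Master:
    """Holds the queue of patches; it never initiates contact with a client."""

    def __init__(self, patches):
        self.queue = deque(patches)
        self.results = {}

    def request_job(self, client_id):
        """Called by a client when it is ready for work."""
        return self.queue.popleft() if self.queue else None

    def report(self, client_id, patch, result):
        """Called by a client when it has finished testing a patch."""
        self.results[patch] = (client_id, result)


def client_poll_once(master, client_id, run_tests):
    """One iteration of a client's loop: ask for work, run it, report back."""
    job = master.request_job(client_id)
    if job is None:
        return False  # nothing to do right now
    master.report(client_id, job, run_tests(job))
    return True


# Usage: one client drains the queue; a client that never polls is simply ignored.
master = Master(["a.patch", "b.patch"])
while client_poll_once(master, "client-1", lambda patch: "pass"):
    pass
print(master.results)
```

Because the master only answers requests, a client that goes offline or is removed just stops asking for work, and the master has nothing to time out on or clean up in this sketch.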

Public server queue

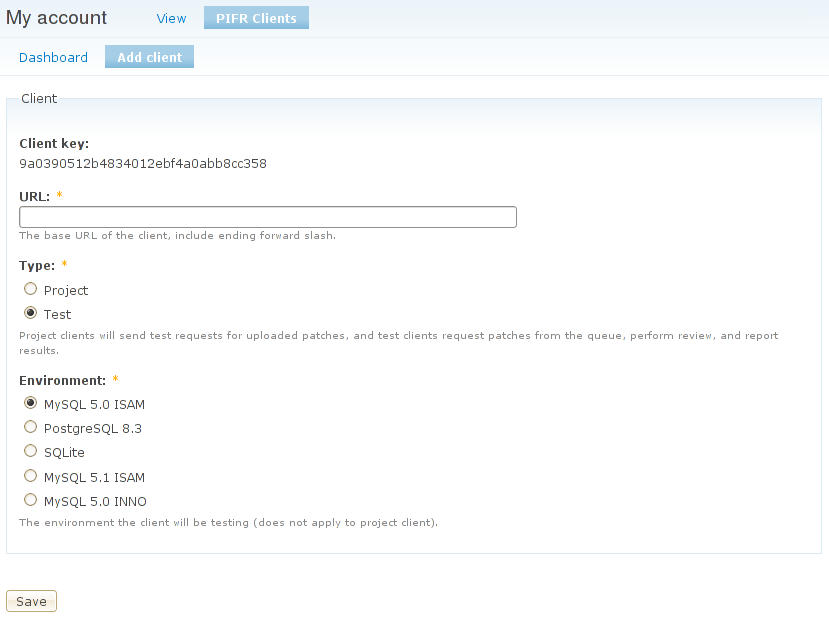

Another improvement that will facilitate a larger testing network is the public server queue. Allowing anyone to add a server to the network is possible since the clients are automatically tested as described above.

The interface has been designed so that users may control the set of machines that they have added to the network. The system automatically assigns the client a key that must be stored on the client and is used for authentication. The process of adding a client to the master list is very simple and should provide an easy way for users to donate servers.
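The post does not say how the key is generated or checked, so the following is only one plausible sketch of key-based client authentication. The functions `issue_client_key` and `authenticate` and the in-memory `registry` dictionary are assumptions for illustration.

```python
import hmac
import secrets


def issue_client_key(registry, client_id):
    """Generate a random key for a newly added client and remember it."""
    key = secrets.token_hex(32)  # 64 hexadecimal characters
    registry[client_id] = key
    return key


def authenticate(registry, client_id, presented_key):
    """Check the key a client presents against the one issued to it."""
    expected = registry.get(client_id)
    if expected is None:
        return False
    # Constant-time comparison; assumes both keys are ASCII strings.
    return hmac.compare_digest(expected, presented_key)


# Usage: the key is issued once and then stored on the client.
registry = {}
key = issue_client_key(registry, "donated-server-1")
print(authenticate(registry, "donated-server-1", key))
print(authenticate(registry, "donated-server-1", "wrong-key"))
```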

If the system detects any issues with the client down the line, such as becoming out of date, it will notify the server administrator of the problem and disable the test client. The system will continually re-test the client and re-enable it automatically if it passes inspection. Alternatively, the server administrator may request that the client be tested immediately after fixing the issue.
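The disable and re-enable cycle described above can be pictured as a small state machine. This is a sketch under assumptions: `ClientRecord`, `Status`, `apply_check`, and the `notify` callback are hypothetical names, and notifying only when a client first becomes disabled is a design choice of this example rather than something the post states.

```python
from enum import Enum


class Status(Enum):
    ENABLED = "enabled"
    DISABLED = "disabled"


class ClientRecord:
    """What the master remembers about one test client."""

    def __init__(self, name, admin_email):
        self.name = name
        self.admin_email = admin_email
        self.status = Status.ENABLED


def apply_check(client, passed, notify):
    """Update a client's status after a periodic or admin-requested check."""
    if passed:
        client.status = Status.ENABLED  # re-enabled automatically
    elif client.status is Status.ENABLED:
        client.status = Status.DISABLED
        notify(client.admin_email, f"Test client {client.name} was disabled")
    return client.status


# Usage: fail once, fail again, then pass after the administrator fixes it.
sent = []
client = ClientRecord("client-7", "admin@example.com")
for outcome in (False, False, True):
    apply_check(client, outcome, lambda to, message: sent.append((to, message)))
print(client.status, len(sent))
```

An administrator-requested re-test would call the same function; only the trigger differs.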

Multiple database support

The new system has been abstracted to allow for the support of PostgreSQL and SQLite in addition to MySQL. This is vital to ensure that Drupal 7 properly supports all three databases. Just as patches are not committed until they pass all the tests, patches will not be committed until they pass all the tests on all three databases (5 environments with the database variations).